A Performance Marketer's Framework for Testing Creator Ads

A 5-step framework for testing creator ads like paid media: hypothesis-first briefs, isolated variables, dedicated test campaigns, and a documented learning loop.

Paid social has evolved over the last two years. As targeting signals have weakened, creative has become the lever doing the most work, driving 56% of an ad campaign's sales lift. And 77% of marketers are now sourcing that creative from creators, with usage rights baked into influencer marketing contracts from the get-go.

With creator content this central to the paid mix, testing and optimizing it has become one of the most important processes in any paid program. Below, we've put together a 5-step framework for building a strong testing program. Here's how it runs, end to end.

Why do creator-led ads need a different testing approach?

Creator content behaves differently from brand creative once it hits Ads Manager, and the testing framework has to account for that.

Just take a look at these performance patterns:

- Creator content drives up to 4x higher click-through rates than traditional studio assets, with significantly lower cost-per-click. But this lift only holds when there's enough variety in the test set to optimize against.

- Top-spending accounts ship 12 to 19+ creatives per week, while mid-tier accounts ship 6 to 7. On a monthly basis, that's the gap between 20 to 50 fresh creatives from top performers and 3 to 5 from everyone else, and it correlates almost perfectly with paid social ROAS at scale.

- Meta's Andromeda algorithm update explicitly rewards radical creative variation, evaluating thousands of times more ad permutations in parallel than its predecessor and penalizing accounts where all the ads look alike. If your creator ads are 50 versions of the same hook, the algorithm picks one and starves the rest.

What does this mean for marketers? Creator content is high-leverage, but only when it enters the ad account with a structured testing system around it. Volume without variety doesn't work, and variety without rigor doesn't either.

The 5-step framework for testing creator ads

The workflow below is how we've seen brands scale creator ads at meaningful budget levels, distilled into five repeatable steps. Let’s get started.

Step 1: Write the hypothesis before you write the brief

Every test starts with a hypothesis, written down before any creator is contacted. A clean hypothesis names the variable being tested, the expected direction of the result, and the reasoning behind it.

For example: "First-person POV hooks will outperform problem-solution hooks for our serum category by at least 25% on CTR, because our top three organic posts all use POV framing."

That single sentence forces a sharper brief, a cleaner test setup, and a real conclusion at the end. Without it, the post-mortem becomes a Slack thread guessing at why a piece of content underperformed.
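One way to keep hypotheses consistent is to store each one as a small record and refuse to brief until every field is filled in. The sketch below is illustrative only: the `Hypothesis` class and its field names are assumptions made for this example, not part of any ad platform or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """Hypothetical record for one creative test hypothesis."""
    variable: str     # the one thing being tested, e.g. "hook"
    challenger: str   # the variant expected to win
    baseline: str     # the variant it is compared against
    metric: str       # the success metric, named up front
    min_lift: float   # expected relative lift; 0.25 means 25%
    reasoning: str    # why this result is expected

    def is_complete(self) -> bool:
        """True when every text field is filled in and the lift is positive."""
        text_fields = [self.variable, self.challenger, self.baseline,
                       self.metric, self.reasoning]
        return all(f.strip() for f in text_fields) and self.min_lift > 0


# The serum example from above, written as a record.
serum_test = Hypothesis(
    variable="hook",
    challenger="first-person POV",
    baseline="problem-solution",
    metric="CTR",
    min_lift=0.25,
    reasoning="Top three organic posts all use POV framing",
)
```

A hypothesis with an empty `reasoning` field fails `is_complete()`, which is a cheap prompt to finish the thinking before the brief goes out.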

Step 2: Isolate one variable per test cell

Creator ads have at least four moving variables: the creator, the hook, the angle, and the format. A test that changes all four at once between cells produces no usable learning. So, pick the variable being tested and hold the others constant.

For example:

- Testing creators? Same hook, same angle, same format, different person on camera.

- Testing hooks? Same creator, same angle, same format, three different opening lines.

- Testing angles? Same creator, same format, same hook structure, three different value propositions (save time vs. save money vs. solve a specific pain point).

- Testing formats? Same creator, same script, cut for Reels, Stories, and feed.

Testing one variable at a time produces real learnings instead of just more output. Clean single-variable tests also cost less to run, because they need smaller sample sizes than multivariate tests. The signal isn't getting buried in noise, so the learning lands faster and sharper.
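The single-variable rule is easy to check before launch. As a minimal sketch, assuming each test cell is described by the four variables named above (the dictionary layout and function names here are made up for illustration):

```python
VARIABLES = ("creator", "hook", "angle", "format")


def varying_variables(cells: list[dict[str, str]]) -> list[str]:
    """Return the variables that take more than one value across the cells."""
    return [v for v in VARIABLES if len({cell[v] for cell in cells}) > 1]


def is_single_variable_test(cells: list[dict[str, str]], tested: str) -> bool:
    """True only when the tested variable is the sole one that changes."""
    return varying_variables(cells) == [tested]


# A hook test: same creator, angle, and format; three opening lines.
hook_test = [
    {"creator": "A", "hook": "question", "angle": "save time", "format": "Reels"},
    {"creator": "A", "hook": "POV reveal", "angle": "save time", "format": "Reels"},
    {"creator": "A", "hook": "bold claim", "angle": "save time", "format": "Reels"},
]
```

If `varying_variables` returns more than one name, the cells are confounded and the result can't be attributed to any single change.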

Step 3: Brief for intentional variety, not raw volume

When it comes to briefing creators, you want to give them enough guidance to produce the content variety you need, while still leaving them room for the creative freedom that makes their content feel authentic.

If your brief is too loose, 10 creators may come back with nearly identical executions of the same "authentic content" prompt. If it’s too tight, the content reads like a corporate ad, which is what creator content is supposed to avoid in the first place.

Design the variety at the variable level instead. In other words, different creators get assigned to different variables in the test: one films a question hook, one films a POV reveal, one anchors on the time-saving angle, one anchors on the affordability angle. Each creator gets clear direction on which variable they own, and full creative latitude on setting, delivery, scene composition, props, and pacing. That's where their knowledge of their own audience does the most work, and it's the part the brand has no business writing for them.

Your brief should include:

- The hypothesis being tested, in plain language so each creator understands what their piece is supposed to learn

- The specific hook, angle, or format that creator is responsible for

- Format specs for vertical and square cuts so the asset works across placements

- Usage rights and ad permissions baked in from the start

- Creative freedom on everything else

As a result, you’ll get 5 to 10 distinct, on-brief variants that still feel authentic to the creators who made them. The test gets clean data because each creator owns a clearly defined variable, and the content still performs because each piece feels native to the person on camera.

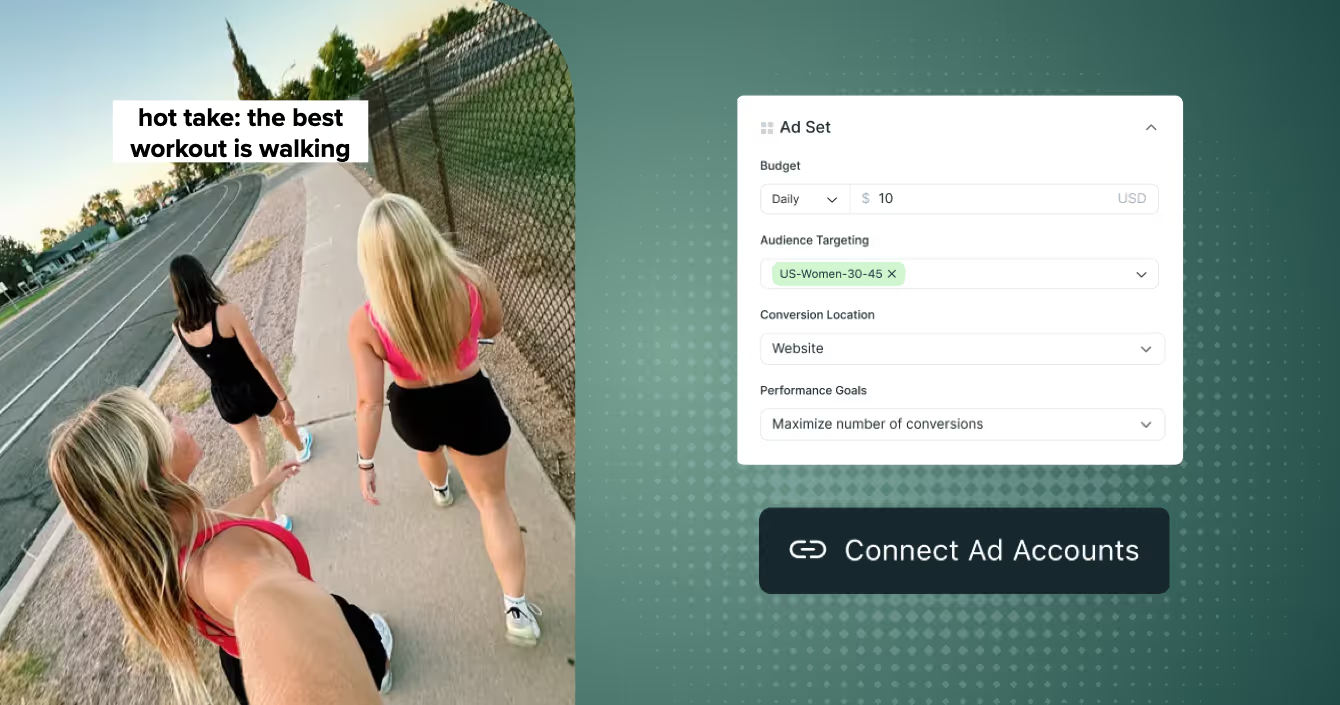

Step 4: Run a dedicated testing campaign separate from your scaled winners

New creator ads cannot fairly compete against your scaled top performers. The scaled ads have weeks of pixel data, accumulated social proof, and an audience the algorithm has already learned to serve them to. A new test ad has none of that, and even strong new creative will get suppressed in a head-to-head matchup against a fatigued-but-optimized incumbent.

Run a dedicated testing campaign with its own budget allocation. Here’s the breakdown most performance teams rely on:

- 70 to 80% of paid social budget on scaled winners in always-on campaigns

- 15 to 25% on the active testing campaign, with new creator ads cycling in every two weeks

- 5 to 10% on retesting and re-cuts, for assets that showed promise but didn't meet the graduation threshold the first time

New ads enter the testing campaign first. They graduate to the scaled campaigns only after clearing a pre-set performance threshold (CTR, ROAS, CPA, whichever metric was named in the hypothesis). That structure protects your scaled performance while keeping the learning loop alive.
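To make the split and the graduation rule concrete, here is a rough sketch of the arithmetic. The function names, the default shares, and the sample budget are assumptions chosen for illustration; the only figures taken from the framework are the three percentage ranges.

```python
def split_budget(total: float, testing_pct: int = 20, retest_pct: int = 5) -> dict[str, float]:
    """Split a paid social budget; scaled winners get whatever remains."""
    scaled_pct = 100 - testing_pct - retest_pct
    in_range = (15 <= testing_pct <= 25
                and 5 <= retest_pct <= 10
                and 70 <= scaled_pct <= 80)
    if not in_range:
        raise ValueError("shares fall outside the 70-80 / 15-25 / 5-10 ranges")
    return {
        "scaled": total * scaled_pct / 100,
        "testing": total * testing_pct / 100,
        "retest": total * retest_pct / 100,
    }


def graduates(metric: str, value: float, threshold: float) -> bool:
    """Check a test ad against its pre-set threshold.

    Cost metrics (CPA, CPC) pass when they come in at or below the
    threshold; everything else passes at or above it.
    """
    lower_is_better = metric.upper() in {"CPA", "CPC"}
    return value <= threshold if lower_is_better else value >= threshold


# Hypothetical monthly budget of 50,000 with the default 75/20/5 split.
plan = split_budget(50_000)
```

Note that the top ends of the testing and retesting ranges can't both be used at once: 25% plus 10% would leave only 65% for scaled winners, so the sketch rejects that combination.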

Step 5: Set a duration floor and pre-commit to the success metric

Don't end a creative test too early, and don't move the goalposts on the success metric once the test is running.

The practical duration floor is 7 to 14 days per test cell, with enough impressions to clear statistical noise. Day-of-week patterns, audience pacing, and platform pacing all need a full weekly cycle to show up cleanly.

For the success metric, pre-commit before the test launches, whether it’s CTR, ROAS, CPA, hook rate, or thumb-stop rate. Pick one, write it down, and judge the test against it. Otherwise the metric quietly drifts toward whatever happens to look favorable for the asset the team is already attached to, and the testing discipline collapses.

Before declaring a winner, run through this checklist:

- Did the test hit the impression floor?

- Did the metric stabilize or is it still swinging?

- Is the lift large enough to justify graduating the ad to a scaled campaign?
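The checklist above can be turned into a simple gate. This is a sketch under stated assumptions: the impression floor, the minimum lift, and the stability rule (how much the last three daily readings vary relative to their average) are placeholders to tune for your own account, not platform-defined values. Only the 7-day minimum comes from the duration floor described earlier.

```python
from statistics import mean, pstdev


def ready_to_call(impressions: int, daily_metric: list[float], lift: float,
                  impression_floor: int, min_lift: float,
                  max_swing: float = 0.15) -> dict[str, bool]:
    """Answer the three checklist questions for one test cell.

    daily_metric holds one reading of the success metric per day.
    "Stabilized" is an assumed rule: at least 7 days of data, and the
    last 3 readings vary by no more than max_swing relative to their mean.
    """
    recent = daily_metric[-3:]
    avg = mean(recent) if recent else 0.0
    swing = pstdev(recent) / avg if avg else float("inf")
    return {
        "hit_impression_floor": impressions >= impression_floor,
        "metric_stabilized": len(daily_metric) >= 7 and swing <= max_swing,
        "lift_large_enough": lift >= min_lift,
    }


# Hypothetical 10-day cell: CTR bounces early, then settles.
daily_ctr = [0.031, 0.018, 0.026, 0.022, 0.021,
             0.0215, 0.0212, 0.0208, 0.0211, 0.0209]
checks = ready_to_call(impressions=4400, daily_metric=daily_ctr, lift=0.32,
                       impression_floor=4000, min_lift=0.25)
winner = all(checks.values())
```

Returning each answer separately, rather than a single yes or no, shows which question a test failed, which is useful when deciding whether to extend it or move it to the retest budget.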

Bonus: Build a testing log

As a bonus last step, document your learnings somewhere your team will actually open again.

A simple testing log captures the hypothesis, the variables, the result, and a one-sentence learning for every test that runs. After a few tests, patterns start emerging that no individual test can show. After dozens of tests, you have a creative playbook specific to your category and audience that no external benchmark can match.

A workable log entry looks like this:

Test 14, April 2026.

- Hypothesis: POV hooks outperform problem-solution hooks for serum category.

- Variables held: same creator, same product, same format.

- Result: POV hooks drove 32% higher CTR over 10 days and 4,400 impressions per cell.

- Learning: lead with POV in next wave; queue problem-solution for retargeting tests only.

This will save the next person on the team a month of relearning the same lesson. Brands running creator ad testing programs for 12+ months without a log usually end up running the same hypothesis 3 times before noticing.
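If a spreadsheet feels too loose, the same log can live as structured records that are easy to search before a new test is planned. The sketch below assumes a hypothetical `LogEntry` shape with one field per item in the Test 14 entry; the JSON Lines export is just one convenient storage choice.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class LogEntry:
    """Hypothetical record for one completed creative test."""
    test_id: int
    date: str
    hypothesis: str
    variables_held: list[str]
    result: str
    learning: str


def to_jsonl(entries: list[LogEntry]) -> str:
    """Serialize entries as JSON Lines, one test per line."""
    return "\n".join(json.dumps(asdict(e)) for e in entries)


def search(entries: list[LogEntry], keyword: str) -> list[LogEntry]:
    """Find past tests whose hypothesis or learning mentions the keyword."""
    kw = keyword.lower()
    return [e for e in entries
            if kw in e.hypothesis.lower() or kw in e.learning.lower()]


log = [
    LogEntry(
        test_id=14,
        date="April 2026",
        hypothesis="POV hooks outperform problem-solution hooks for serum category.",
        variables_held=["creator", "product", "format"],
        result="POV hooks drove 32% higher CTR over 10 days and 4,400 impressions per cell.",
        learning="Lead with POV in next wave; queue problem-solution for retargeting tests only.",
    ),
]
```

Running `search(log, "pov")` before writing a new hypothesis is a quick way to catch a test the team has already run.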

Best practices for briefing creators inside a testing framework

A few principles to layer in when briefing creators for content meant to be tested:

- Pay for the variants, not just the hero. A brief asking for 6 distinct executions deserves a different compensation conversation than a brief asking for 1. Pay accordingly and the variant quality follows.

- Show creators the test logic. Most creators have never been told what a creative test cell is. Walking them through the hypothesis produces sharper variants because they understand what each version is trying to do.

- Watch the first three seconds before anything else. The opening hook is the single biggest predictor of test outcomes on Reels, Shorts, and TikTok. Approve or reject variants on the strength of those three seconds alone.

- Brief in waves, not one-offs. Briefing five creators against a unified hypothesis produces a cleaner test set than briefing one creator five times. Variance across people is a feature.

- Share results back with creators. Most creators never see how their content performed as a paid ad. Sharing the numbers makes them better partners on the next wave and often surfaces creative tweaks you wouldn't have thought of.

From creator content to performance engine

A creator program with an ad testing framework around it will produce not only ads that perform better, but also an internal asset of brand-specific learnings (which hooks, angles, formats, and creator types convert for your category).

To get started, pick one hypothesis, brief one wave of variants against it, run a clean test cell, and write down what you learned.

Want to see how Aspire helps brands run creator ad testing programs at scale, across briefing, rights, and direct integrations with Meta Partnership Ads and TikTok Spark Ads? Book a demo with our team.